WORLD ACTION MODEL

Learning physical intelligence through observation and interaction — enabling robots to understand and act in the real world, and generalize across tasks, environments, and embodiments.

From Physical Interaction to World UNDERSTANDING

Learning physical intelligence through real-world interaction — enabling robots to manipulate, adapt, and generalize across tasks, environments, and embodiments.

Large-Scale Observation

Diverse robot operation videos, human demonstrations, and proprioceptive recordings — providing broad priors about objects, motion, and environmental dynamics.

Human Demonstrations

Videos

Language

Proprioception

Tactile-Augmented Simulation & Training

Simulation generates diverse interaction variants at scale — augmented with tactile signal modeling to better reflect real contact dynamics.

Real-world tactile data from Sentra continuously recalibrates simulation parameters, ensuring virtual training remains grounded in physical reality.
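As a rough illustration of what recalibrating a simulation parameter from real tactile data could look like, the sketch below fits a single friction coefficient to measured forces and nudges the simulator's value toward it. The function names, the `(normal_force, tangential_force)` sample format, and the blending `rate` are all assumptions made for this example, not a description of the actual pipeline.

```python
from typing import Sequence, Tuple

def estimate_friction(samples: Sequence[Tuple[float, float]]) -> float:
    """Least-squares fit of mu in F_t = mu * F_n.

    Each sample is (normal_force, tangential_force) in newtons, recorded
    at slip onset. The sample format is hypothetical.
    """
    num = sum(fn * ft for fn, ft in samples)
    den = sum(fn * fn for fn, _ in samples)
    if den == 0.0:
        raise ValueError("need at least one sample with nonzero normal force")
    return num / den

def recalibrate(sim_mu: float,
                samples: Sequence[Tuple[float, float]],
                rate: float = 0.5) -> float:
    """Move the simulator's friction coefficient toward the measured estimate."""
    return sim_mu + rate * (estimate_friction(samples) - sim_mu)
```

Blending with a `rate` below 1.0, rather than overwriting the parameter, is one simple way to keep a single noisy batch of measurements from destabilizing the simulator.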

Real Interaction Data

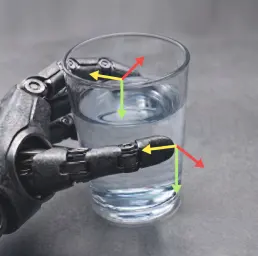

High-fidelity tactile interaction data capturing force dynamics, contact transitions, and material response: the physical ground truth that vision cannot provide.

Contact Force Maps

Material Response

Slip Detection

3D Force Signals

World Action Models

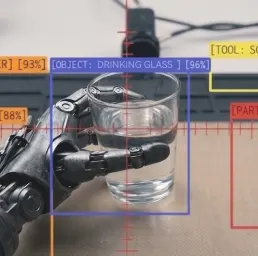

PREDICTIONS GROUNDED IN HOW THE WORLD CAN BE ACTED UPON, NOT JUST HOW IT LOOKS

Inputs

Vision

Tactile

Language

Proprioception

Outputs

Physical Future Prediction

Continuous Action Policy

In contact-rich tasks, tactile signals provide continuous feedback on force, slip, and material response, revealing actionable affordances that guide stable execution through every phase of contact.
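The four inputs and two outputs listed above can be pictured as a typed interface. The sketch below is only a shape illustration: the field types, the choice of a next tactile frame as the "physical future", and the `placeholder_model` function are assumptions, and the stand-in returns trivial values where a learned model would go.

```python
from dataclasses import dataclass
from typing import List

@dataclass
class Observation:
    vision: List[List[float]]    # image features; the shape is an assumption
    tactile: List[float]         # e.g. per-taxel force readings
    language: str                # task instruction
    proprioception: List[float]  # e.g. joint positions

@dataclass
class Prediction:
    future_tactile: List[float]  # predicted physical future (here: next tactile frame)
    action: List[float]          # continuous action, one value per joint

def placeholder_model(obs: Observation) -> Prediction:
    """Stand-in for a learned model: predicts no change and a zero action."""
    return Prediction(
        future_tactile=list(obs.tactile),
        action=[0.0] * len(obs.proprioception),
    )
```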

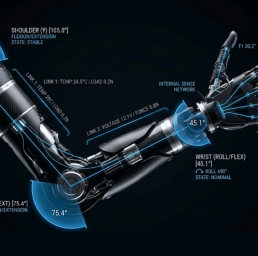

Physical interaction follows causal laws — not visual patterns. By grounding models in tactile physics, skills learned in one context transfer to unseen objects and environments without task-specific retraining.

Force, compliance, and contact dynamics are the universal language of physical interaction. Models trained on one platform transfer across robot morphologies — reducing deployment cost for every new embodiment.

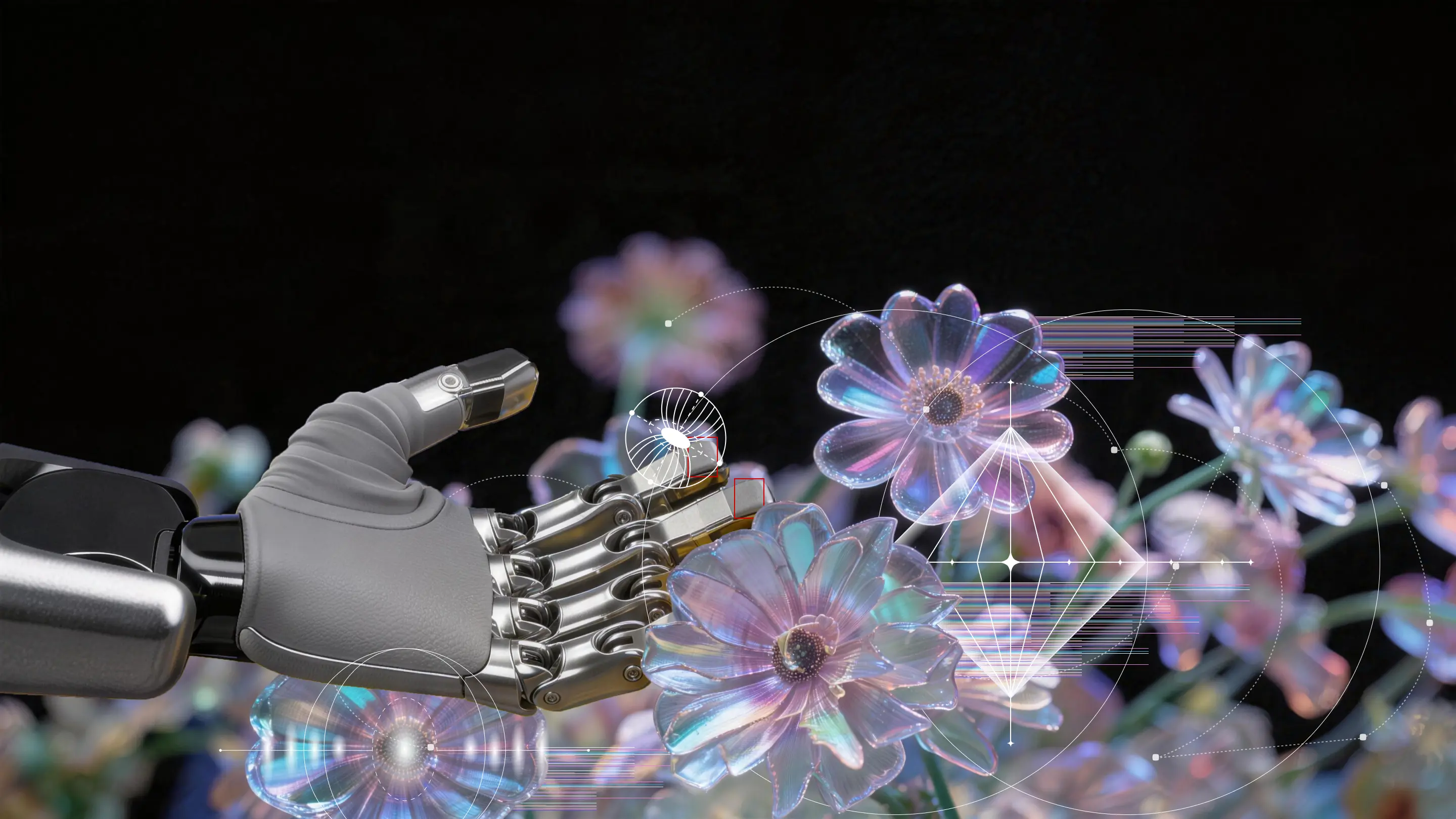

OPEN

INTENT-DRIVEN MANIPULATION

Robots perceive, plan, and act from intent, without scripts or task-specific programming.

ADAPTIVE TACTILE INTERACTION

Millisecond tactile feedback stabilizes manipulation behavior in real time.
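One tick of such a feedback loop might look like the sketch below, which raises or lowers grip force to keep a safety margin above the slip threshold. It assumes a simple Coulomb friction model; the function name and every parameter value (`mu`, `margin`, `gain`, `max_force`) are hypothetical and chosen only for illustration.

```python
def grip_force_update(normal: float, tangential: float, mu: float = 0.6,
                      margin: float = 1.5, gain: float = 0.5,
                      max_force: float = 20.0) -> float:
    """Return the next commanded normal (grip) force in newtons.

    Under Coulomb friction, slip starts when |tangential| > mu * normal,
    so the force needed to hold with a safety margin is
    margin * |tangential| / mu.
    """
    required = margin * abs(tangential) / mu
    # Proportional step toward the required force.
    target = normal + gain * (required - normal)
    # Clamp to the gripper's allowed range.
    return min(max(target, 0.0), max_force)
```

Calling this once per tactile sample gives a proportional controller that tightens the grip as shear load grows and relaxes it when the load drops.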

BUILT-IN SKILL PRIMITIVE

Composable skill units designed for modular scaling across tasks.
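A minimal way to picture composable skill units is as functions from state to state that can be chained. The sketch below is an assumption-laden toy: the `compose` helper, the dictionary state, and the `reach`, `grasp`, and `lift` primitives are hypothetical names invented for this example.

```python
from typing import Any, Callable, Dict

State = Dict[str, Any]
Skill = Callable[[State], State]

def compose(*skills: Skill) -> Skill:
    """Chain skills left to right into a single skill."""
    def run(state: State) -> State:
        for skill in skills:
            state = skill(state)
        return state
    return run

def reach(state: State) -> State:
    return {**state, "at_object": True}

def grasp(state: State) -> State:
    if not state.get("at_object"):
        raise RuntimeError("cannot grasp before reaching the object")
    return {**state, "holding": True}

def lift(state: State) -> State:
    return {**state, "height": state.get("height", 0.0) + 0.1}

# A larger skill built from three primitives.
pick = compose(reach, grasp, lift)
```

Because `compose` itself returns a skill, composed units such as `pick` can be reused as building blocks in longer sequences.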

OPEN SKILL ECOSYSTEM

A shared skill library where manipulation intelligence accumulates, is reused, and continuously evolves across deployments.

TURNING PHYSICAL INTELLIGENCE INTO REAL-WORLD IMPACT